Audit Logs

Note: Audit logs are only available in the Scalr enterprise tier.

Scalr audit logs provide a comprehensive, immutable record of all user and system activities within your environments, tracking everything from workspace changes to user authentication. This detailed logging ensures you can meet security requirements, demonstrate compliance, and maintain full traceability over your infrastructure operations. Scalr currently supports two delivery modes: real-time streaming to monitoring and event platforms (Datadog and Amazon EventBridge), and 24-hour batch delivery to cloud storage (AWS, GCP, Azure).

Monitoring & Event Platforms

Scalr can stream audit log events in real time to Datadog or Amazon EventBridge. Events are delivered as they occur and are available immediately in Datadog for monitoring and alerting, or routed through your AWS event bus to any downstream target such as Lambda, SQS, or third-party SaaS integrations. This makes both integrations well-suited for organizations that need live visibility into user activity or want to incorporate Scalr audit events into existing observability and automation pipelines.

Datadog

Audit logs can reuse the Datadog connection that is already used for events, or you can create a new one.

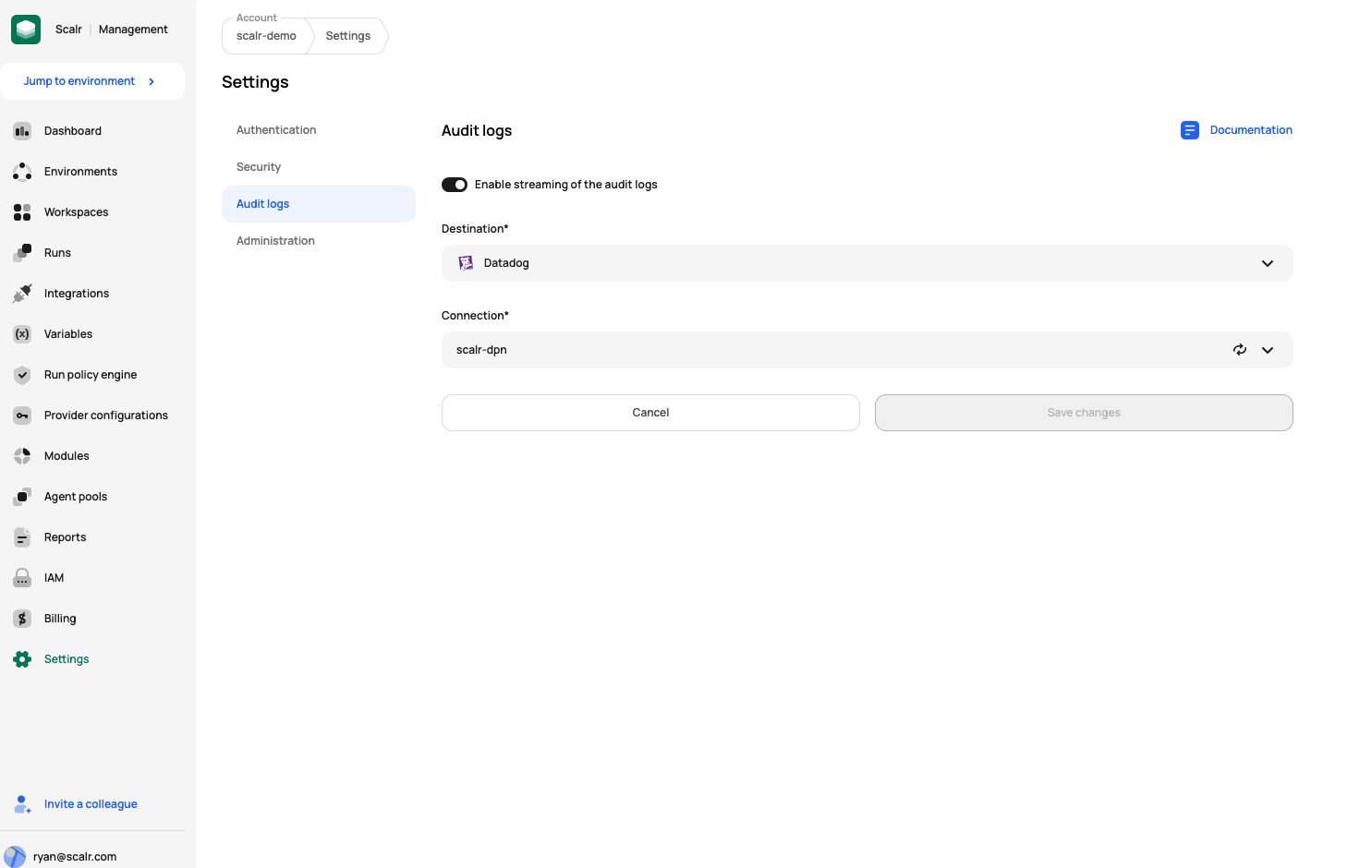

To enable audit logs, go to the account settings page, click on security, and click on audit logs. Enable the streaming of logs, select Datadog as the destination, and add the connection:

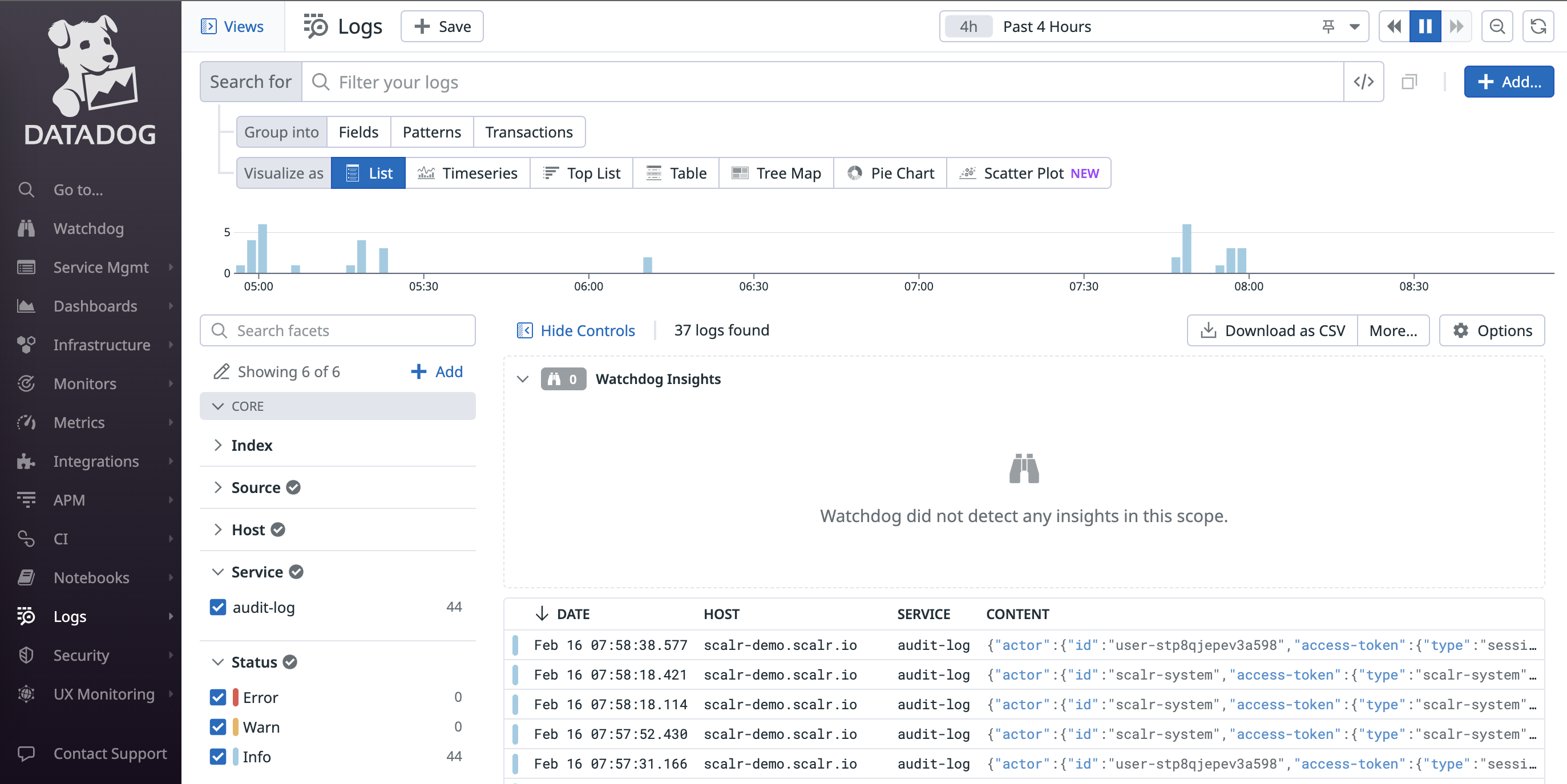

You will now see the Scalr logs in the Datadog logs feature.

Here is an example of a full log output:

{
  "id": "AgAAAY2x_1pxzx914QAAAAAAAAAYAAAAAEFZMnhfMXRKQUFDY1RCNUtZb3FoTlFBQQAAACQ12345656E4ZGIxZmYtNWE3MS00MTU3LWJlMTctODhmMTZhYTU5Nzhl",
  "content": {
    "timestamp": "2024-02-16T12:58:38.577Z",
    "tags": [
      "scalr-workspace-name:demo-ws",
      "scalr-environment-name:cs-m",
      "scalr-workspace:ws-v0o370ouv5kbjmk9h",
      "scalr-user-email:[email protected]",
      "scalr-action:discard-run",
      "scalr-environment:org-sscctbkdgkdr123",
      "source:scalr",
      "datadog.submission_auth:private_api_key"
    ],
    "host": "docs.scalr.io",
    "service": "audit-log",
    "attributes": {
      "actor": {
        "id": "user-stp8qjepev3a123",
        "access-token": {
          "type": "session",
          "token": "...B6wifA"
        },
        "type": "user",
        "email": "[email protected]"
      },
      "request": {
        "ip-address": "69.206.111.123",
        "action": "discard-run",
        "id": "a3a473d725c730382558c25d52bd1234",
        "source": "ui",
        "user-agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/121.0.0.0 Safari/537.36"
      },
      "hostname": "docs.scalr.io",
      "service": "audit-log",
      "outcome": {
        "result": "SUCCESS",
        "status-code": 202
      },
      "target": {
        "display-name": "run-v0o370pcff0e53123",
        "context": {
          "environment": {
            "display-name": "CS-M",
            "id": "org-sscctbkdgkdrqg0"
          },
          "workspace": {
            "display-name": "demo-ws",
            "id": "ws-v0o370ouv5kbjmk9h"
          },
          "account": {
            "display-name": "docs",
            "id": "acc-sscctbisjkl3123"
          }
        },
        "id": "run-v0o370pcff0e53",
        "type": "runs"
      },
      "timestamp": "2024-02-16T12:58:38.577846"
    }
  }
}

AWS EventBridge

Audit logs can also be streamed to AWS EventBridge, which lets you forward them to logging services such as CloudWatch, New Relic, or Sumo Logic. Follow the AWS EventBridge documentation to configure the integration and enable log streaming.

Once the EventBridge integration is configured, follow the steps in the Datadog section above to enable audit log streaming, selecting EventBridge as the destination.
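As a rough illustration of a downstream target, the sketch below shows a Lambda-style Python handler that reduces an audit event to a few alert-friendly fields and flags anything that did not succeed. It assumes the EventBridge `detail` field carries the same object that appears under `attributes` in the Datadog example; this page does not confirm that layout, so inspect a real event before relying on it. The names `summarize_audit_event` and `handler`, and the shape of the returned summary, are hypothetical.

```python
from typing import Any


def summarize_audit_event(detail: dict[str, Any]) -> dict[str, Any]:
    """Pull the fields most useful for alerting out of one audit event."""
    actor = detail.get("actor", {})
    request = detail.get("request", {})
    outcome = detail.get("outcome", {})
    target = detail.get("target", {})
    return {
        # Fall back to the actor id when no email is present.
        "actor": actor.get("email") or actor.get("id"),
        "action": request.get("action"),
        "source": request.get("source"),
        "target_type": target.get("type"),
        "target_id": target.get("id"),
        "result": outcome.get("result"),
        "status_code": outcome.get("status-code"),
    }


def handler(event: dict[str, Any], context: Any = None) -> dict[str, Any]:
    # ASSUMPTION: the Scalr payload sits under the EventBridge "detail" key
    # and mirrors the "attributes" object from the Datadog example.
    summary = summarize_audit_event(event.get("detail", {}))
    # Anything other than an explicit SUCCESS is flagged for review,
    # including events where the outcome is missing entirely.
    summary["needs_review"] = summary["result"] != "SUCCESS"
    return summary
```

Treating a missing outcome as reviewable is a deliberately conservative choice: an event that does not match the assumed layout surfaces as a flag rather than passing silently.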

Demo

The following demo shows how to configure audit logs for Datadog or AWS EventBridge:

Cloud Storage

Scalr can deliver audit log events to a customer-owned cloud storage bucket in AWS (S3), GCP (Cloud Storage), or Azure Blob Storage. Events are exported once every 24 hours in JSON format and written as immutable, append-only objects; existing files are never modified or deleted by Scalr. This integration is suited for organizations that require long-term retention under their own data control, such as those using S3 Glacier or equivalent cold storage tiers for cost-effective archival.

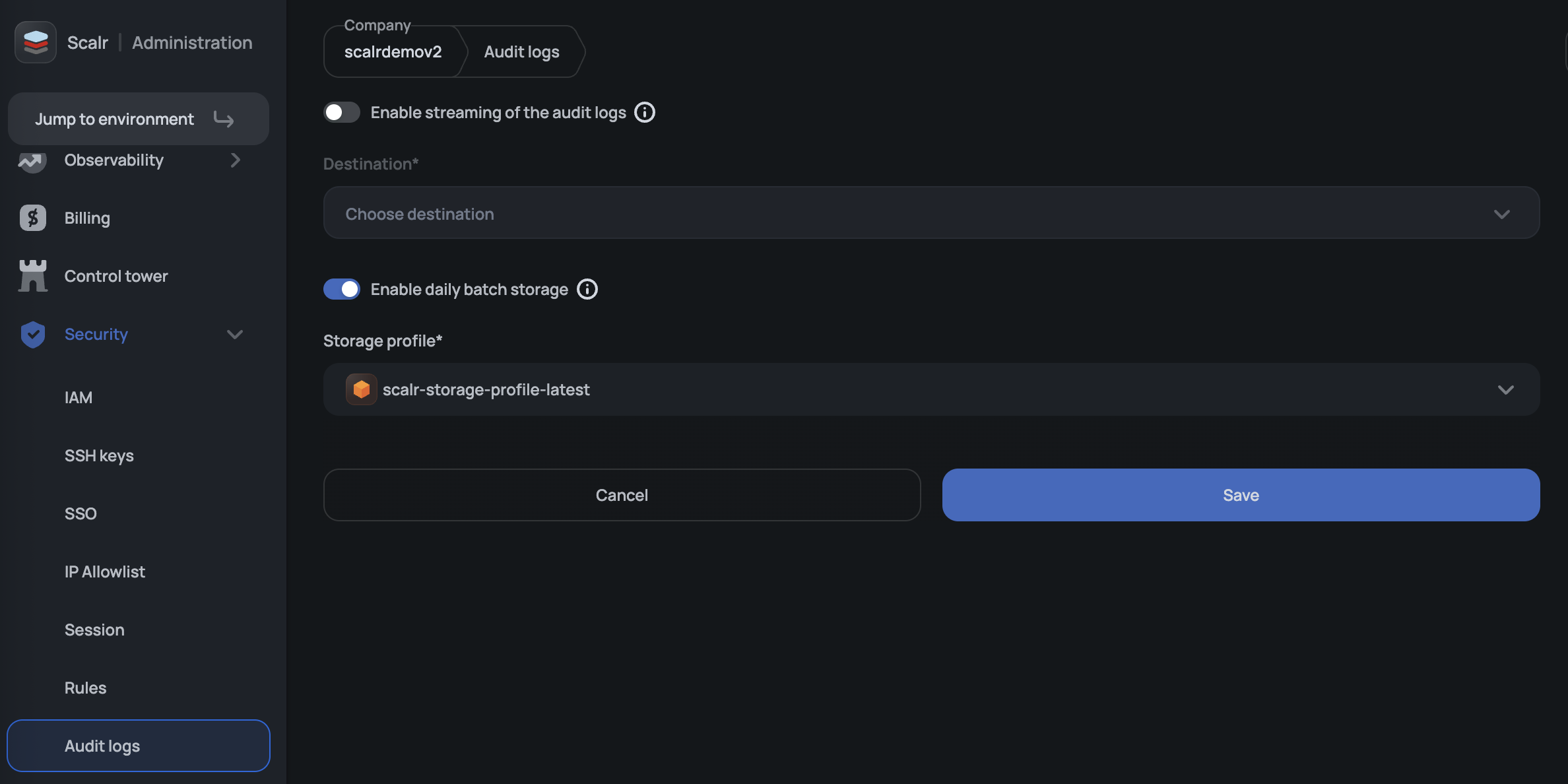

To enable cloud storage delivery, navigate to Audit Logs and select a storage profile. The storage profile must already exist and be configured with credentials for your target bucket.
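Once exports land in your bucket, they can be analyzed offline. The following is a minimal sketch that counts actions per actor in the text of one downloaded export file. It assumes the file holds either a JSON array of events or one JSON object per line, and that each event has the `actor` and `request` objects shown in the Datadog example; neither assumption is confirmed by this page, so check an actual export first. The function names `iter_events` and `count_actions` are hypothetical.

```python
import json
from collections import Counter
from typing import Any, Iterator


def iter_events(text: str) -> Iterator[dict[str, Any]]:
    """Yield events from a JSON array or from newline-delimited JSON."""
    stripped = text.strip()
    if not stripped:
        return
    if stripped.startswith("["):
        # ASSUMPTION: the whole file is a single JSON array of events.
        yield from json.loads(stripped)
        return
    # ASSUMPTION: otherwise, each non-empty line is one complete JSON object.
    for line in stripped.splitlines():
        if line.strip():
            yield json.loads(line)


def count_actions(text: str) -> Counter:
    """Count (actor, action) pairs across all events in one export."""
    counts: Counter = Counter()
    for event in iter_events(text):
        actor = event.get("actor", {})
        who = actor.get("email") or actor.get("id") or "unknown"
        action = event.get("request", {}).get("action", "unknown")
        counts[(who, action)] += 1
    return counts
```

For example, `count_actions(open("export.json").read()).most_common(10)` would list the ten most frequent actor and action pairs, where `export.json` stands in for whatever file name your export actually uses.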
