General FAQ

What is a run?

There are two types of runs in Scalr:

- A standard run is one that actually runs a Terraform apply.

- A dry run, or speculative run, is one that executes the plan, cost estimation, and policy phases, but not the apply.

Which runs do not count against billing?

Some runs are not counted toward the runs you are billed for. At a high level, the following runs are not billed:

- Runs triggered by the drift detector.

- Runs that use local execution mode in the workspace.

- Runs that fail during terraform init due to:

  - Missing folder or var file

  - Terraform code parsing errors

  - Connectivity errors (registries, Docker, etc.)

  - Broken provider configuration errors

- Runs that violate a pre-plan OPA policy.

- Runs that violate a pre-plan Checkov policy.

- Runs that fail during the cost-estimate phase.

- Runs that fail due to an internal Scalr error.

What are the quotas on Scalr objects?

All objects in Scalr have a quota to avoid abuse of the system. Any quota can be increased free of charge by opening a support ticket at support.scalr.com and providing the use case for the increase. The quotas for the business tier are as follows:

| Object | Default Quota |
|---|---|
| Workspaces | 5,000 |
| Users | 1,000 |
| Environments | 500 |
| VCS Providers | 5 |
| Policy Groups | 20 |
| Run Triggers | 500 |
| Agents | 25 |
| Modules | 100 |

How long is state and run information retained in Scalr?

The following retention policy applies to runs:

- Run history for all applies is kept for 3 years from the run date. Older runs are deleted, unless the workspace has 10 runs or fewer.

- Run history for all plan-only runs will be kept for 30 days from the run date. All older runs will be deleted.

The following retention policy applies to state files:

- Scalr retains the last 100 state files per workspace. Older state files are deleted, unless the workspace has 10 state files or fewer.

What are the Scalr IPs to whitelist?

The IPs that need to be whitelisted for scalr.io are listed in this API endpoint.
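For a quick check outside Terraform, the list can also be fetched from the command line. The sketch below assumes the endpoint is the allowlist URL used in the Terraform snippet in this section and that it returns plain text with one IP or CIDR per line. The function names parse_allowlist and fetch_scalr_allowlist are hypothetical.

```shell
# Hypothetical helper: normalize allowlist text read from stdin.
# Strips carriage returns and surrounding whitespace, and drops empty lines.
parse_allowlist() {
  tr -d '\r' | sed -e 's/^[[:space:]]*//' -e 's/[[:space:]]*$//' -e '/^$/d'
}

# Hypothetical helper: download the allowlist and print one entry per line.
# Assumption: the endpoint serves plain text, one IP or CIDR per line.
fetch_scalr_allowlist() {
  curl -fsS "https://scalr.io/.well-known/allowlist.txt" | parse_allowlist
}
```

Calling fetch_scalr_allowlist prints the entries one per line, which can then be fed into the firewall tooling of your choice.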

You can also automate the process with Terraform by adding the code snippet below to your template:

data "http" "ips-allowlist" {

url = "https://scalr.io/.well-known/allowlist.txt"

}

locals {

ips_allowlist = split("\n", trimspace(data.http.ips-allowlist.body_response))

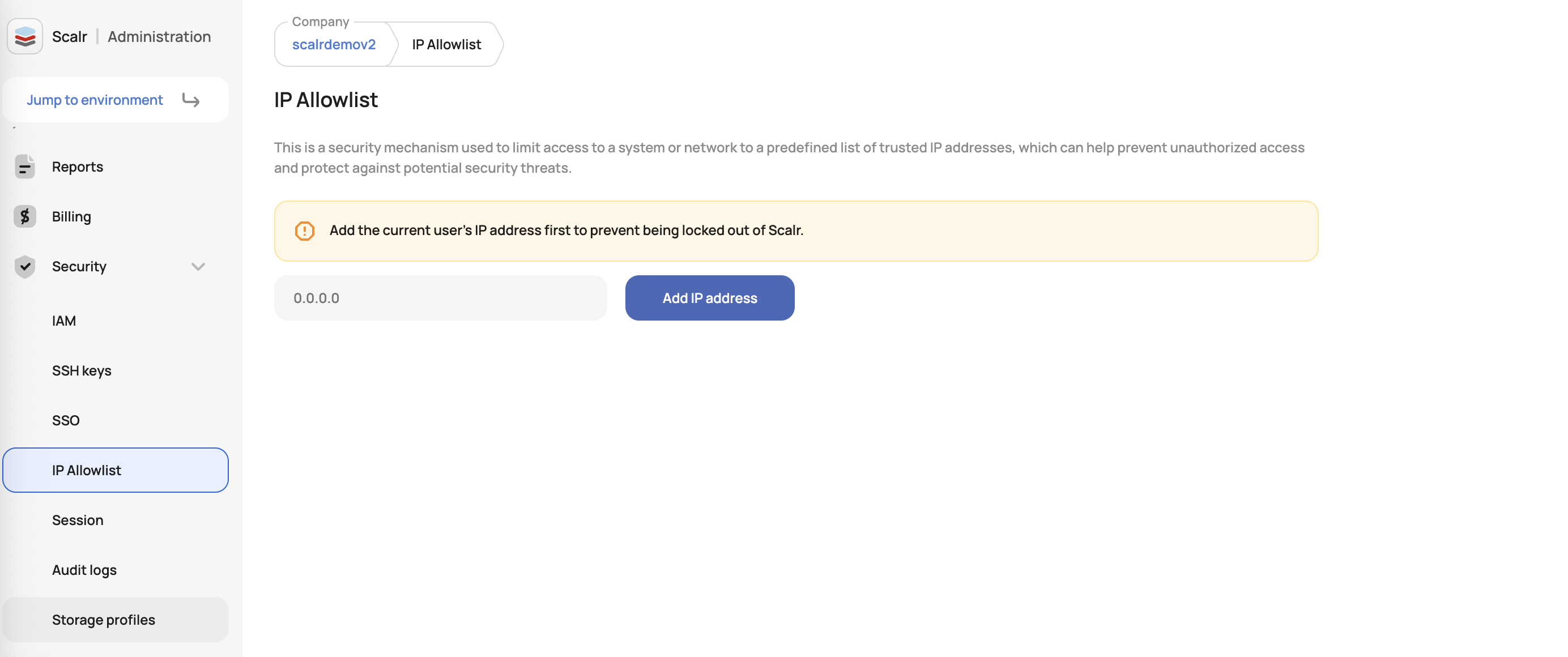

}Can I fence an account?

Yes. To improve security, you can allow only specific public IPs or CIDR ranges to access your Scalr account. Requests from addresses outside the approved list are blocked. This setting is available under the account's security settings.

How is my Terraform or OpenTofu state stored?

If a Terraform run is executed within Scalr then the resulting Terraform state file is stored within Scalr. All state files are securely stored in a Scalr-owned and encrypted Google Cloud bucket. Optionally, you can store your state file in your own GCP bucket if desired. Find out more here.

What are Scalr environments?

Environments are the logical grouping of workspaces, teams, policies, and other objects that relate to each other. The majority of the time, we see an environment being the equivalent of an app or team. Generally, there are a few questions that should be answered when thinking of your structure:

- Will I need different policies applied to any of my workspaces within the environment?

- Will the same provider credentials be used across all workspaces?

- Will all users/teams within the environment collaborate on these workspaces?

- Will all workspaces use the VCS provider(s) assigned to this environment?

If you answered yes to any of those questions, you may consider breaking out components into separate environments.

Are Provider Configurations required in Scalr?

Provider configurations are required for Terraform runs to execute, but you decide where configurations are actually stored. Scalr.io provides the ability to store encrypted Provider Configurations and will automatically pass them to runs. Some business requirements, like a Business Associate Agreement (BAA), require that the configurations are not stored with a SaaS vendor and Scalr can accommodate this as well.

For businesses that must store the configurations outside of Scalr, you have the option of using self-hosted agent pools, which provide flexibility in where the configurations are stored. Agents are placed in the network of your choice, and scalr.io never opens a connection to the agent; the agent only pulls information from scalr.io and executes Terraform runs accordingly. Because provider configurations only need to be passed to the Terraform runs as shell variables, you have flexibility in how the configurations are actually supplied:

- Using automation to set it as an OS variable on the agent server.

- Pulled from a vault at the time of run execution with custom hooks.

- Inherited from the instance profile of the server that the agent is hosted on.

Any of these methods ensures that scalr.io never has access to your configurations, which are managed solely within your network.
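As a rough illustration of the first option, the sketch below exports credentials from a local file into the environment of the shell that launches the agent. The function name load_provider_env and the file path in the usage note are hypothetical, and the AWS variable names are only one example of provider credentials. How the agent process inherits these variables depends on how it is installed, so treat this as a starting point rather than the documented procedure.

```shell
# Hypothetical helper: export every KEY=VALUE pair from a local env file.
# $1 is the path to the file; it should be readable only by the agent user.
load_provider_env() {
  env_file="$1"
  if [ ! -r "$env_file" ]; then
    echo "cannot read $env_file" >&2
    return 1
  fi
  set -a          # mark all variables assigned from here on for export
  . "$env_file"   # read the KEY=VALUE assignments into the current shell
  set +a          # stop auto-exporting
}
```

For example, with a file such as /etc/scalr-agent/provider.env (a hypothetical path) that sets AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY, running load_provider_env /etc/scalr-agent/provider.env before starting the agent makes both variables visible to child processes.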

How do I migrate or update Terraform state in Scalr?

To migrate state to Scalr, change the Terraform configuration to use Scalr as a remote backend. Regardless of where the state is stored now (local, S3 bucket, etc.), the Terraform CLI (terraform init) will detect the configuration change and automatically migrate the state to Scalr.

- If there is an existing remote state, update the terraform {} block to be similar to the example below.

- If the state is currently local, add a complete terraform {} block similar to the example below.

terraform {
  backend "remote" {
    hostname     = "my-account.scalr.io"
    organization = "env-ssccu6d5ch64lqg"

    workspaces {
      name = "migrate-demo"
    }
  }
}

- Run terraform init to migrate the state and answer "yes" to the prompt. This will create the workspace and upload the state to Scalr.
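The migration steps can be sketched as a small shell function. It assumes the terraform {} block has already been updated, that the CLI needs credentials for the Scalr hostname (obtained here with terraform login), and that a final terraform state list is a reasonable way to confirm the migrated state is readable. The function name migrate_state_to_scalr is hypothetical.

```shell
# Hypothetical helper: migrate the current directory's state to Scalr.
# $1 is the Scalr hostname, for example my-account.scalr.io.
migrate_state_to_scalr() {
  scalr_host="$1"
  # Assumption: store an API token for the Scalr hostname in the CLI credentials
  terraform login "$scalr_host" || return 1
  # Detects the backend change and prompts to copy the existing state; answer "yes"
  terraform init || return 1
  # List the resources in the migrated state as a sanity check
  terraform state list
}
```

Run it from the directory that contains the updated configuration, for example: migrate_state_to_scalr my-account.scalr.io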

Can Scalr use the Terraform credential helper?

Scalr supports credential helpers if you have a use case where it is needed. To use the helper remotely, it must be installed in the following location within the workspace: terraform.d/plugins/terraform-credentials-scalr.

For self-hosted customers, the helper can be added and configured on the Scalr worker server for global use in the following location: /opt/scalr-server/var/lib/tf-worker/plugins/terraform-credentials-scalr

Why do I need the Scalr hierarchy?

The Scalr hierarchical model is one of the key differentiators of Scalr that helps businesses of all sizes scale their Terraform usage. At Scalr, our goal is to help you centralize your Terraform administration and decentralize your Terraform operations. What does this mean?

Things that should be centralized in the account scope:

- IAM (RBAC, Teams, Users)

- OPA Policies

- Module Registry (Required modules)

- Cross Account Reporting

- Provider Credentials

- VCS providers

- Shared Variables

Things that should be decentralized at the environment scope:

- Workspaces

- Workspace orchestration and scheduling

- Run execution

- Module Registry (Team/App specific modules)

By centralizing the administration, the administrative team can ensure the developers are deploying their Terraform in a controlled manner while also having visibility over it. This then allows the developer teams to increase their velocity by managing their workflows within Scalr environments without interruption.

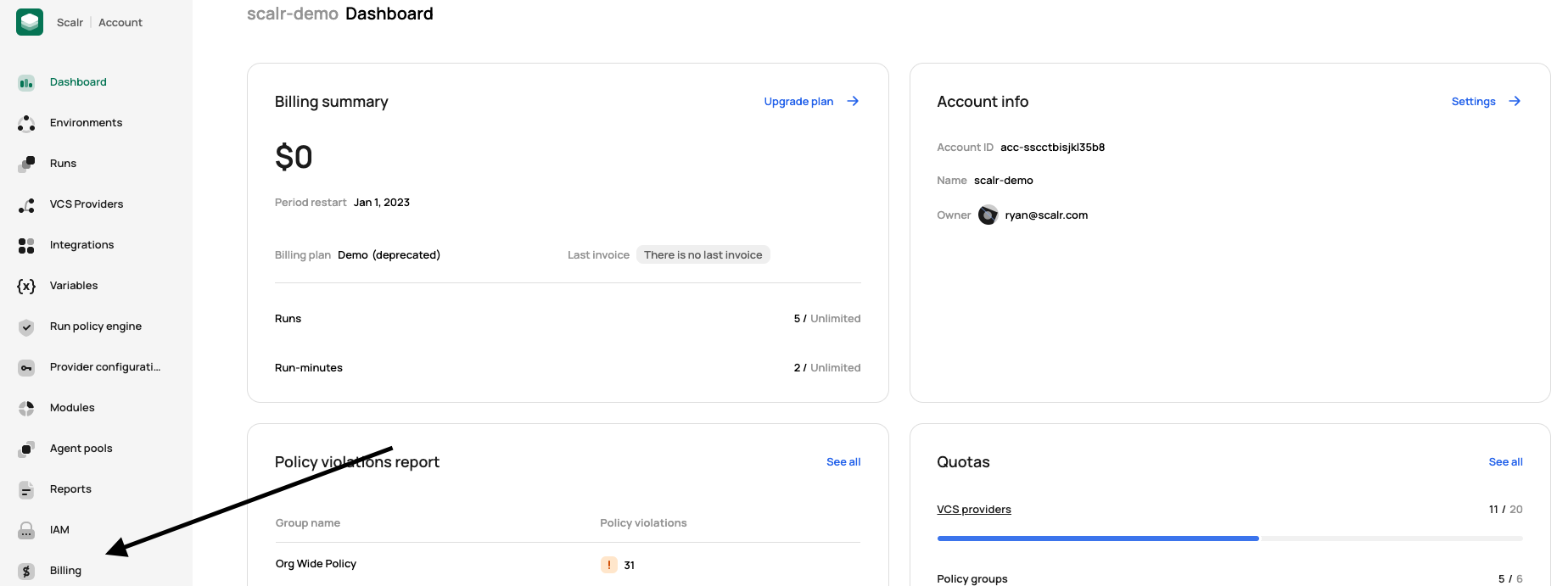

How do I get my invoice?

Invoices can be retrieved by your team through the Scalr UI by going to the account scope and clicking on the billing page:

Then go to invoices to view and download:

The accounts:billing permission is required to view and access the invoices.

If you do not have access to Scalr, please contact your account administrator, who will be able to retrieve the invoice for you.

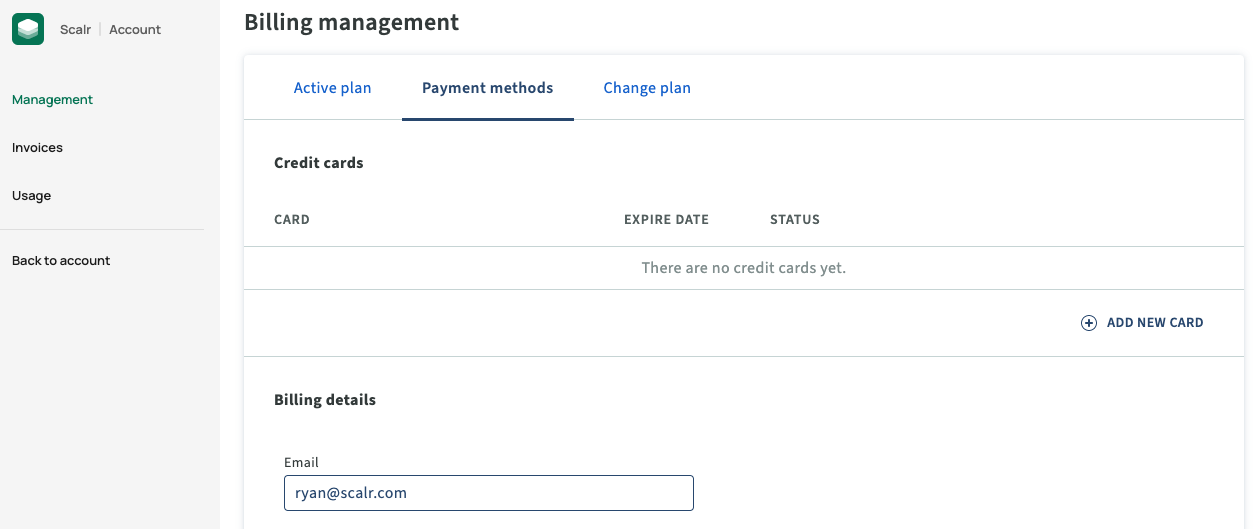

Where are my invoices sent?

Invoices are sent to the email address in the billing details section unless a ticket was opened and a different email was provided.

Where can I find Scalr's W9?

You can find the W9 for Scalr here: http://scalr.com/w9

Enable EPEL Repo for the Agent

Download the CentOS GPG Key

# Get OS Release number

OS_RELEASE=$(rpm -q --qf "%{VERSION}" $(rpm -q --whatprovides redhat-release))

OS_RELEASE_MAJOR=$(echo $OS_RELEASE | cut -d. -f1)

# Download the GPG key and save locally

curl -s https://www.centos.org/keys/RPM-GPG-KEY-CentOS-$OS_RELEASE_MAJOR | sudo tee /etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-$OS_RELEASE_MAJOR

Create the Repo File

# Get OS Release number

OS_RELEASE=$(rpm -q --qf "%{VERSION}" $(rpm -q --whatprovides redhat-release))

OS_RELEASE_MAJOR=$(echo $OS_RELEASE | cut -d. -f1)

# Create the repo file

cat << EOF | sudo tee /etc/yum.repos.d/centos-extras.repo

#additional packages that may be useful

[extras]

name=CentOS-$OS_RELEASE_MAJOR - Extras

mirrorlist=http://mirrorlist.centos.org/?release=$OS_RELEASE_MAJOR&arch=\$basearch&repo=extras

#baseurl=http://mirror.centos.org/centos/$OS_RELEASE_MAJOR/extras/\$basearch/

enabled=1

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-$OS_RELEASE_MAJOR

priority=1

EOF

Build the Repo Cache

sudo yum -q makecache -y --disablerepo='*' --enablerepo='extras'

Scalr Labs

"Scalr labs" features are new, in-progress features whose details may change over time. Labs features are split into two categories:

- Experimental: These features may or may not remain in the product, and errors may or may not be corrected. They are intended to show you functionality that could be incorporated into Scalr in the future and to gather your feedback. These features are always opt-in: the account or user can choose to use them or leave them disabled. Support for these features is best effort.

- Beta: These features are expected to remain in the product, and errors are expected to be resolved at some point. However, these features may change in detail, and errors may not be fixed at the same speed as with normal features. P0 and P1 support tickets should not be opened for beta features.

What does a Terraform version deprecation mean?

On occasion, we will announce the deprecation of a Terraform version. A version is deprecated when it is no longer supported by the community. When a version is deprecated in Scalr:

- It can continue to be used by workspaces that already have it defined.

- Existing workspaces cannot switch to a deprecated version.

- It cannot be used for new workspaces.

- The Scalr team does not guarantee it will continue to work in the scalr.io platform.

If you are using a deprecated version, we highly recommend migrating off of it as soon as possible.

Current deprecated versions:

- 0.12.x

- 0.13.x
